Can you spot the difference between AI and lab data?

Play our game to see if you can tell whether datasets are from GEM-1, the lab, or the clinic!

At Synthesize Bio, our goal is to use AI to accurately predict the results of future or even impossible experiments. But what does “accurately predict” mean?

We put a lot of thought into this question. In fact, we tackled it before we even started model building in earnest.

We decided that an excellent model should be accurate from two very different perspectives. First, it should be accurate at a high level. The whole transcriptomes of AI- and lab-generated data should be as similar as possible. Second, it should be accurate at a very fine-grained level. Individual genes must be regulated just as experts would expect.

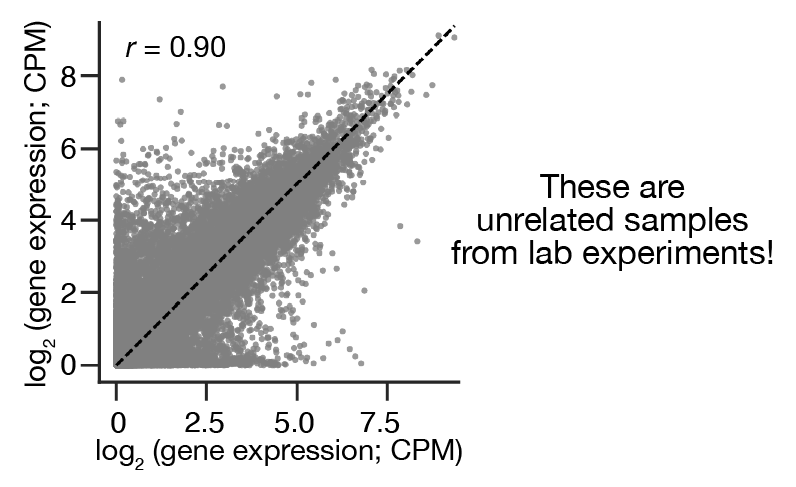

Meaningfully assessing high-level accuracy was surprisingly tricky. The transcriptomes of even unrelated biological samples are well correlated, because gene expression is so strongly structured: some genes are highly expressed in almost every sample, and others are almost always low.

So, we created new statistics that normalized out this similarity. Check out Figure 2 in our recent preprint for the details.
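To make the correlation problem concrete, here is a small simulated sketch. It is not the statistic from our preprint. Everything in it is an assumption made for illustration: the per-gene `baseline`, the noise levels, the separate `reference` cohort, and the helper `mean_offdiag_corr`. It only shows that unrelated samples sharing a per-gene baseline look highly correlated, and that centering each gene on a reference profile removes most of that shared signal.

```python
import numpy as np

rng = np.random.default_rng(0)
n_genes, n_samples = 2000, 6

# Hypothetical per-gene baseline: log-expression levels that span a wide
# range, because some genes are always high and others always low.
baseline = rng.normal(loc=5.0, scale=3.0, size=n_genes)

# "Unrelated" samples: the shared baseline plus independent noise per sample.
samples = baseline[:, None] + rng.normal(scale=1.0, size=(n_genes, n_samples))


def mean_offdiag_corr(x):
    """Mean Pearson correlation over all pairs of distinct columns of x."""
    c = np.corrcoef(x, rowvar=False)
    off_diagonal = ~np.eye(c.shape[0], dtype=bool)
    return c[off_diagonal].mean()


raw_corr = mean_offdiag_corr(samples)

# Estimate the baseline from a separate simulated reference cohort, then
# subtract it so that only sample-specific variation remains.
reference = baseline[:, None] + rng.normal(scale=1.0, size=(n_genes, 50))
centered = samples - reference.mean(axis=1, keepdims=True)
centered_corr = mean_offdiag_corr(centered)

print(f"mean pairwise correlation, raw:      {raw_corr:.2f}")
print(f"mean pairwise correlation, centered: {centered_corr:.2f}")
```

In this toy setup the raw correlation is high even though the samples share nothing except the baseline, while the centered correlation falls close to zero. A raw correlation between AI- and lab-generated transcriptomes would therefore say very little on its own.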

We came up with a game to address the fine-grained perspective! (Kudos to Greg Koytiger, our Head of AI, for this idea.) We picked a lab dataset, generated a parallel AI dataset, and then asked people whether they could tell the difference. Two years ago, the difference was obvious to everybody. As we improved our models, the difference became more subtle, until a memorable day when nobody we asked could tell.

So – can YOU tell which dataset is from the lab and which is from GEM-1?

Thanks for playing our game. You can also try GEM-1 on your most important scientific problem via our web platform or our Python and R APIs. Let us know how your experiments go!